Why Do Intelligent People Lose Disputes?

Litigation as a Subplot: Viewing the Court Case Within a Broader Conflict

A lawsuit is almost always just a single element of a much larger conflict. The real dispute—the one that exists independently of the courtroom—is often far broader than what the court actually addresses. It can involve a wider network of people, stem from historical tensions, or even generate entirely new disputes. Consequently, legal proceedings are usually just one of many battlegrounds—and frequently not the most critical one.

This dynamic is especially clear in corporate warfare. A shareholder often challenges a board resolution not because it is defective, but because blocking it stalls a hostile takeover or strengthens their bargaining power. The court is left analyzing arguments manufactured solely for the trial, which have little to do with the actual core of the dispute.

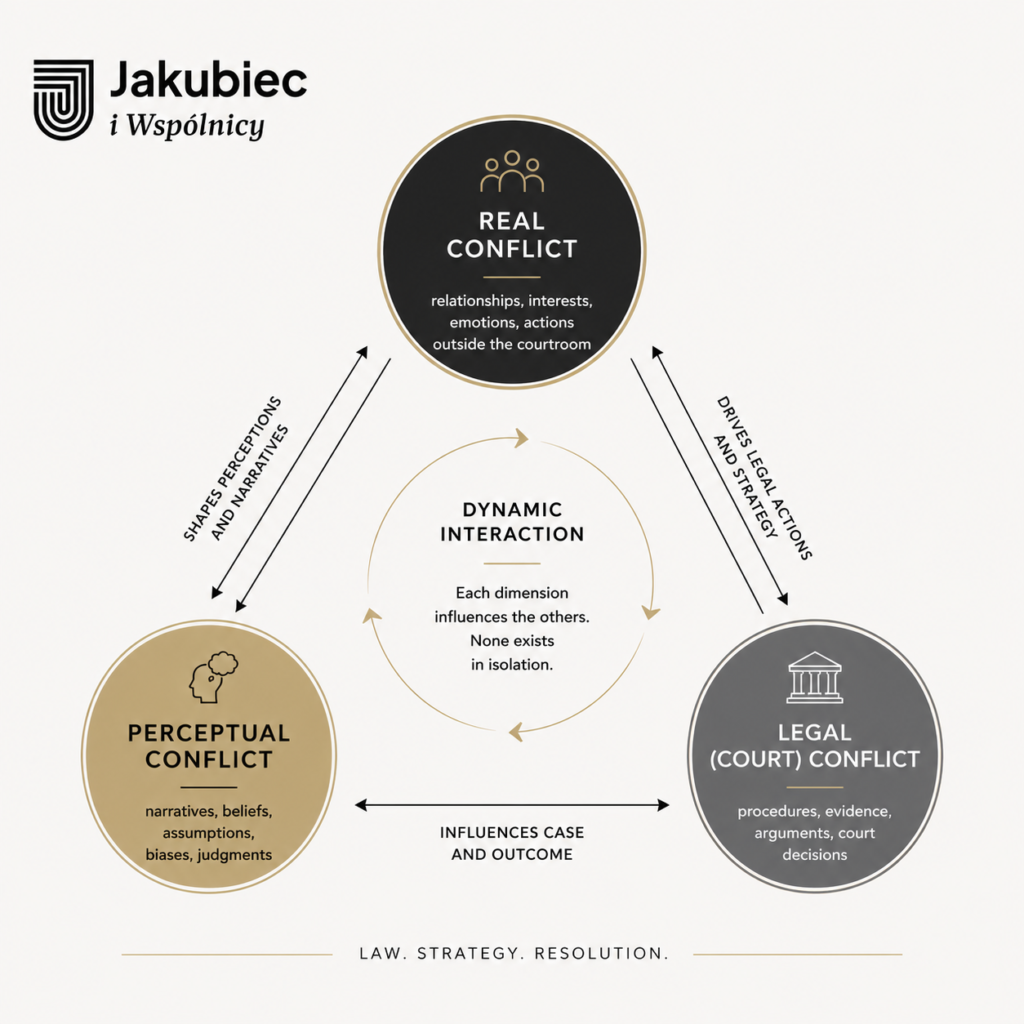

To bring structure to this chaos, let us recognize three distinct dimensions of conflict:

1. The Substantive (Real) Conflict: what the battle is actually about.

2. The Perceptual Conflict: how each party subjectively views the situation.

3. The Legal (Procedural) Conflict: what formally makes its way into the courtroom.

Dynamic interaction between the three dimensions of conflict:

A single legal proceeding is often just one clash among many between the same or interconnected parties. The conflict simultaneously rages across other fronts: operational, communicative, reputational, familial, or financial.

Crucially, both sides can view the position of a given lawsuit on the “conflict map” entirely differently. The upper hand goes to the party whose map reflects reality more accurately—yet the perception of each actor is, at the same time, a structural element of that very reality.

Therefore, it is vital to remember: you can win the case and lose the conflict. You can also lose the case and achieve all your strategic goals. Even highly intelligent people routinely blind themselves to this distinction. It is a fatal error committed by corporate strategists, politicians, military commanders, lawyers, advisors, entrepreneurs, and spouses in crisis alike.

To illustrate this, let me share an example. I once handled a case involving two brothers who were partners in a limited liability company (sp. z o.o.). One of them maliciously blocked the other’s dividend payout, fully aware that his brother desperately needed the cash. The case went to a commercial court. Armies of lawyers, forensic accountants, and business valuation experts were brought in. Over time, it turned out that the brothers had simply had a massive falling out over who was supposed to host Christmas Eve dinner. The court could have litigated for ten years without ever touching the true essence of the dispute. Any formal judgment would have only deepened their conflict.

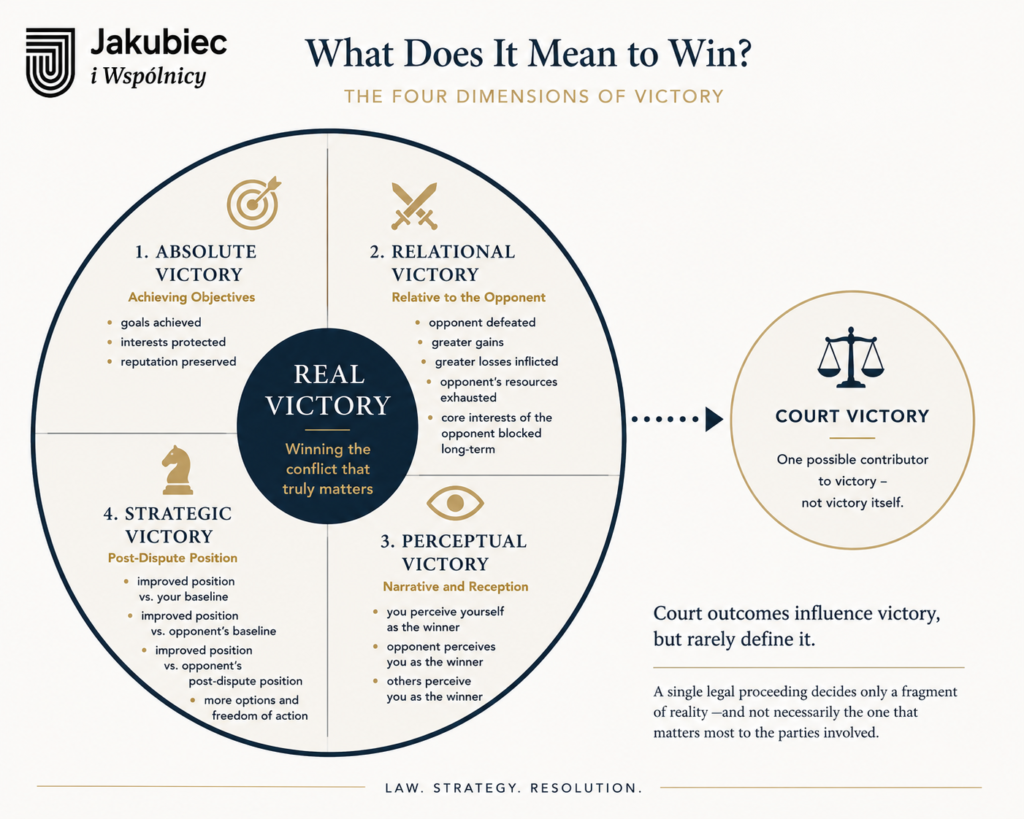

Redefining Victory: What Does It Actually Mean to “Win”?

What, then, constitutes victory? It is certainly not the mere act of winning a court case. If Pyrrhus had been a lawyer, he would have agreed with me without hesitation. For anyone interested in this subject, I highly recommend Thomas Schelling’s brilliant book, The Strategy of Conflict.

While I have presented a detailed exploration of how winning and losing are defined in a separate article, I will limit myself here to the most common understandings of victory. In practice, they can be divided into four distinct categories:

1. Absolute Victory — Achieving Personal Objectives

- You win if you achieve your original, baseline plans.

- You win if, post-dispute, you retain more options and opportunities to pursue your core interests.

- You win if you incur lower reputational costs.

2. Relational Victory — Outcome Relative to the Opponent

- You win if you defeat the opponent in a direct, head-to-head confrontation.

- You win if you extract more benefit than the other side.

- You win if you inflict heavier losses on the opponent than you sustain yourself.

- You win if you drive the exhaustion of the opponent’s resources.

- You win if you permanently prevent the opponent from achieving their core interests in the long run.

3. Perceptual Victory — Narrative and Reception

- You win if you subjectively perceive yourself as the winner.

- You win if your opponent perceives you as the winner.

- You win if external observers perceive you as the winner.

4. Strategic Victory — Post-Dispute Position

- You win if your position after the dispute is stronger: a) than your own baseline position, b) than the opponent’s baseline position, or c) than the opponent’s post-dispute position.

As we can see, a single legal proceeding rarely guarantees victory in any of these categories. The court rules only on a single fragment of reality—and not necessarily the one that matters most to the parties involved. In divorce, corporate, or asset disputes, a court may decide a crucial matter, but just as often, it touches upon only one of many threads, completely disconnected from what determines a real win or loss.

Furthermore, the outcome of a dispute can be evaluated entirely differently by various individuals. This divergence typically stems from:

- The application of different criteria for success;

- Access to asymmetrical information; or

- Discrepancies in the time horizon through which the consequences are viewed.

The Real Reason Why Smart People Fail

This is exactly why intelligent people lose so often. Driven by sheer determination, they make one of three mistakes:

- They take actions that cannot logically lead to their intended goal;

- They define the goal itself incorrectly, so that achieving it fails to improve their overall position; or

- They concentrate heavily on the least significant aspect, such as a perceptual victory (the need to be deemed the winner), which in practice yields a profound strategic defeat.

Yet, this very discrepancy can be useful. It allows parties to save face—which is frequently the ultimate psychological prerequisite for accepting a factual defeat.

The Anatomy of Failure: Why Smart People Lose in Court

Failure stems from various causes. However, before we dissect them, it is worth noting something crucial: not all failure is inherently bad. Sometimes, a loss closes a flawed alternative and forces a course of action that proves highly beneficial in the long run. Certain failures function merely as a system correction mechanism—painful, yet necessary.

However, if we want to understand why highly intelligent people fail, we must map the root causes of failure across the three dimensions of conflict: the real, the perceptual, and the legal. Most importantly, we must expose the specific errors characteristic precisely of intelligent individuals.

Table: Why Highly Intelligent People Fail Across the Three Dimensions of Conflict

| Dimension of Conflict | Specific Failure Pattern | Why Smart People Are Especially Vulnerable |
|---|---|---|
| Real Conflict | Overconfidence | Intelligent individuals overestimate their ability to predict the behavior, intentions, and thresholds of other actors. |
| Real Conflict | Illusion of Completeness | They construct coherent, elegant narratives from incomplete data because their minds refuse informational gaps. |
| Real Conflict | Elegance Bias | They prefer intellectually satisfying solutions over those that are operationally effective. |
| Real Conflict | Planning Fallacy | They underestimate time, cost, friction, and opponent counter‑moves due to excessive trust in their own planning ability. |
| Perceptual Conflict | Narrative Capture | They become prisoners of their own internally coherent story, which eventually outweighs the actual facts. |
| Perceptual Conflict | Confirmation Bias 2.0 | They do not merely seek confirmation; they actively engineer it through sophisticated rationalization. |
| Perceptual Conflict | Self‑Justification | Their intelligence makes it harder to admit misjudgment, leading to escalation rather than correction. |
| Perceptual Conflict | Misreading the Audience | They overestimate how much others care about the conflict, misjudge stakeholder investment, and misread reputational stakes. |
| Legal Conflict | Legal Tunnel Vision | They equate legal correctness with strategic victory, misunderstanding the limited role of law in a dynamic conflict. |
| Legal Conflict | Overengineering Arguments | They overcomplicate and over‑refine arguments, losing sight of what actually persuades a judge. |
| Legal Conflict | Misreading the System | They treat the court as a logical machine rather than a human institution with its own constraints and dynamics. |
| Legal Conflict | Cost Blindness | Convinced of the righteousness of their cause, they ignore financial, emotional, reputational, and temporal costs. |

1. Real Conflict — Flaws in Reality Among Intelligent Minds

It is at this foundational level that intelligence most frequently becomes a trap. This is not because smart people think poorly, but rather because they think too well, and their minds refuse to tolerate ambiguity.

- 1.1. Overconfidence: Overestimating Predictive Capabilities. Intelligent people deeply believe they can accurately predict the behavior of other participants in a conflict. This illusion invariably leads to flawed strategic choices.

- 1.2. Illusion of Completeness: Constructing Coherent Narratives from Incomplete Data. The smarter an individual is, the more effortlessly they craft beautiful, logical explanations to fill information gaps. The problem is that these narratives, while intensely compelling, are often entirely false.

- 1.3. Elegance Bias: Choosing Elegant Solutions Over Effective Ones. Intelligent people have a strong tendency to select courses of action that are logical, aesthetic, and intellectually satisfying, yet do not necessarily work in practice. In litigation, elegant solutions often take the form of sophisticated, academic legal theories, while effective solutions are frequently simple, raw, and tactical.

- 1.4. Planning Fallacy: Underestimating Time, Costs, and Friction. The more someone trusts their own planning capability, the more they blind themselves to random variables, procedural delays, opponent counter-moves, and collateral costs. This is a direct path to strategic disasters.

2. Perceptual Conflict — Flaws in Narrative Among Intelligent Minds

This is the most elusive and treacherous plane. Here, intelligence transforms into the ultimate trap, inadvertently triggering a dangerous spiral of escalation.

- 2.1. Narrative Capture: Becoming a Prisoner of One’s Own Story. The more intelligent an individual is, the more easily they manufacture an internal narrative that perfectly justifies their decisions, explains the opponent’s moves, and imposes order onto chaos. Eventually, this narrative becomes more vital to them than the actual facts.

- 2.2. Confirmation Bias 2.0: Intelligent Rationalization. Smart people do not merely seek confirmation for their assumptions; they actively engineer it, brilliantly rationalizing reality to fit their preconceived thesis.

- 2.3. Self-Justification: Defending the Ego. The higher the intelligence, the harder it is to admit a miscalculation: to acknowledge a misread situation, a poorly chosen objective, or a failure of one’s own making. Prioritizing ego over core interests always accelerates escalation.

- 2.4. Misreading the Audience: Flawed Stakeholder Assessment. Intelligent individuals frequently overestimate how deeply external parties care about the conflict, how heavily invested the opponent truly is, or how severely their own reputation is at stake. Consequently, they deploy defensive tactics that serve no strategic purpose.

3. Legal (Court) Conflict — Flaws in Procedure Among Intelligent Minds

This is the arena where intelligent people believe most blindly in the power of their intellect. Paradoxically, that is precisely why they suffer their most devastating defeats here.

- 3.1. Legal Tunnel Vision: Equating Legal Correctness with Strategic Victory. A classic delusion: assuming that if you have the law on your side, if your argument is crystal-clear in its logic, and if the statutes support you, you must win. In reality, strict legal correctness is often strategically useless. The most dangerous error is not misunderstanding the law itself; it is misunderstanding the limited role that law plays within a larger, dynamic conflict.

- 3.2. Overengineering Arguments. The smarter the individual, the more they complicate, over-expand, and refine their arguments, completely losing sight of the simple, raw points that actually persuade a judge.

- 3.3. Misreading the System: Treating the Court as a Logical Machine. Intelligent people often refuse to accept that the court does not operate like their own mind, that legal procedure is not a purely intellectual tool, and that a judge is rarely an audience for idealized, academic discourse.

- 3.4. Cost Blindness: Ignoring Collateral Damage. Blinded by the righteousness of their cause, smart individuals stop calculating real transactional costs: financial depletion, emotional fatigue, reputational hits, and the immense cost of lost time.

Failure’s Anatomy Summary

The Anatomy of Failure: A strategic mapping of the 12 behavioral and procedural traps that lead high-IQ individuals and enterprises to catastrophic defeats across the real, perceptual, and legal dimensions of conflict.

Highly intelligent people do not lose because they lack capability; they lose because they become overconfident in the products of their own thinking. They construct logical, elegant models of conflict that work perfectly in their heads but disintegrate in reality. They spin narratives that protect their ego rather than their enterprise. In court, they focus obsessively on legal victory while remaining entirely blind to strategic defeat.

To win, one must first accept that the real, perceptual, and legal systems operate by an entirely different set of rules than those dictated by our own intelligence. Smart people often hire lawyers who resemble themselves — analytical, academic, theoretical — instead of those who actually win trials.

Shifting the Odds: How to Increase Your Chances of Winning

To provide a meaningful answer to how one can increase the chances of winning, we must maintain our core distinction between the three dimensions of conflict. Addressing this question within the Real and Perceptual dimensions—where battles involve complex psychological warfare, market dynamics, and reputational chess—is far too vast a subject for this chapter. Therefore, I will deliberately set those two layers aside for now and focus exclusively on the tactical mechanics of the Legal (Court) Conflict, specifically within the unique and challenging reality of the Polish judicial system.

In Polish litigation, raw intelligence and a sense of moral entitlement are rarely enough. To navigate the procedural rigidity and systemic unpredictability of Polish courts, a smart strategist must adhere to nine fundamental principles:

1. Enter the Courtroom Only When Absolutely Necessary

The Polish judicial system is notoriously overburdened, slow, and formalistic. Litigation should never be your first impulse; it must be your last resort. Treat the decision to file a lawsuit like a declaration of war—an expensive, exhausting measure deployed only when all alternative strategic options, leverage points, and non-judicial mechanisms have been completely exhausted.

2. Master Both the Facts and the Legal Interpretation

Polish civil and commercial procedures are deeply unforgiving of preparation gaps. You must achieve absolute command over two fronts before the first gavel falls:

- The Evidentiary Base: Establish an airtight, chronological map of undeniable facts supported by robust documentary evidence.

- The Legal Theory: Secure a bulletproof, precise interpretation of the law. In a system where precedents are persuasive but not strictly binding, your legal framework must leave no room for arbitrary interpretation.

3. Rigorously Account for Judicial Risk (Ryzyko Procesowe)

In Poland, “judicial risk” is a structural reality. Different panels or divisions within the exact same court can interpret identical regulations in wildly contrasting ways. Never plan for a best-case scenario. A brilliant strategist calculates the probability of systemic inconsistency, unexpected changes in jurisprudence, and the subjective disposition of the adjudicating judge. If your strategy cannot survive a hostile or unpredictable judicial turn, it is a bad strategy.

4. Select a Top-Tier Trial Advocate

Do not hire an academic or a theorist for a street fight. You need an experienced, highly tactical litigator (adwokat or radca prawny) who understands the gritty reality of Polish courtrooms. A great advocate does not just know the codes; they know how to read the judge, how to react dynamically to unexpected procedural maneuvers by the opponent, and how to deliver surefire, persuasive arguments under extreme time pressure.

5. Secure the Capital Required to Sustain the Siege

Litigation in Poland is rarely a blitzkrieg; it is almost always a war of attrition. Between the initial filing, the exchange of extensive pleadings, delays in scheduling hearings, and the inevitable appellate process, a case can easily drag on for years. You must secure and isolate the necessary financial resources upfront. Entering a legal dispute with a tight budget is a fatal vulnerability; running out of capital midway through a trial forces catastrophic settlements.

6. Construct Razor-Sharp Evidentiary Hypotheses (Tezy Dowodowe)

Under current Polish procedural law, preclusion rules are exceptionally strict. You cannot simply throw a mountain of documents at a judge and hope they find the truth. Every single piece of evidence, every witness, and every expert report must be accompanied by a meticulously drafted, precise evidentiary hypothesis (teza dowodowa). You must clearly state exactly what a specific piece of evidence proves and why it is legally relevant to the core layout of the case. Loose, vague motions will be ruthlessly dismissed by the court.

7. Never Treat the Trial as an End in Itself

The courtroom is not a theater for personal vindication or academic debates. A lawsuit is merely a highly specialized instrument within your broader business or personal framework. Always keep your eyes on the ultimate strategic outcome. If a specific procedural victory does not improve your real-world position, protect your assets, or open up new opportunities, it is an expensive distraction. Never sacrifice your enterprise to win a point of law.

8. Remember that Witnesses and Court Experts Are Only Human

Smart people often expect the court to behave like a flawless, data-driven machine, but it is staffed entirely by human beings.

- Witnesses are deeply unreliable: they forget crucial details over time, perceive events through biased lenses, get confused under cross-examination, or cave under psychological pressure.

- Court-Appointed Experts (Biegli Sądowi)—who carry immense weight in Polish litigation—are also susceptible to human flaws. They can be overworked, deliver superficial or deeply flawed opinions, succumb to professional inertia, or struggle to grasp highly modern business models. Your strategy must always build in a margin of safety for human error and cognitive bias.

9. Run Parallel Negotiations — The Courtroom Door Is Never Locked

A highly sophisticated strategist understands that litigation and negotiation are not mutually exclusive; they are complementary tracks. The fact that you are fighting fiercely inside the courtroom should never stop you from talking outside of it. Parallel negotiations can run continuously, addressing not only the narrow legal dispute itself but also all the broader, structural elements of the conflict that the court is legally blind to. Quite often, a well-executed, aggressive lawsuit is the exact catalyst needed to force a stubborn opponent into a highly favorable settlement.

Litigation is never the whole battlefield; it is only the visible fragment of a much larger strategic landscape.

Table: Nine Principles for Increasing Your Chances of Winning in Polish Litigation

| Principle | Core Idea | Strategic Rationale |
|---|---|---|
| Enter the Courtroom Only When Necessary | Litigation must be a last resort, not a first impulse. | Polish courts are slow, overloaded, and formalistic; premature litigation destroys leverage and drains resources. |
| Master Facts and Legal Interpretation | Achieve total command over evidence and legal theory. | Polish procedure punishes gaps; only airtight facts + precise legal framing survive judicial scrutiny. |
| Account for Judicial Risk | Build a strategy that survives inconsistent jurisprudence. | Identical cases can be decided differently; planning for unpredictability is mandatory. |
| Select a Top‑Tier Trial Advocate | Choose a tactical litigator, not an academic. | Winning requires courtroom instincts, judge‑reading, and rapid tactical adaptation. |
| Secure Litigation Capital | Prepare financial reserves for a multi‑year siege. | Running out of funds mid‑trial forces catastrophic settlements and strategic collapse. |
| Construct Razor‑Sharp Evidentiary Hypotheses | Every piece of evidence must have a precise, articulated purpose. | Strict preclusion rules eliminate vague motions; only targeted evidence survives. |
| Never Treat the Trial as an End in Itself | Court victories matter only if they improve real‑world position. | Procedural wins without strategic value are expensive distractions. |
| Expect Human Fallibility | Witnesses and experts are unreliable, biased, and inconsistent. | Polish courts rely heavily on human testimony and expert opinions, both of which are structurally fallible. |
| Run Parallel Negotiations | Litigate and negotiate simultaneously. | Court pressure often unlocks settlements; negotiations address dimensions the court cannot see. |

Conclusion

Winning in the legal arena demands far more than raw intelligence or an airtight legal argument. As we have dissected, high-IQ individuals and sophisticated corporate actors routinely suffer catastrophic defeats not from a lack of capability, but because they fall prey to their own cognitive biases—becoming captive to elegant models, misreading human fallibility, and confusing strict legal correctness with overarching strategic victory.

Ultimately, a court case is never a standalone battle; it is merely a single subplot within a much larger, dynamic conflict. To tilt the scales in your favor—especially within the rigid and unpredictable landscape of Polish litigation—you must discipline your mind to look beyond the courtroom doors. You must balance aggressive procedural tactics with cold, objective reality, recognize the human limitations of the system, and never stop negotiating outside the courtroom. True victory belongs to those who refuse to let their ego dictate their strategy, and who understand that the ultimate goal is not merely to win a point of law, but to protect and advance their real-world enterprise.

Call to Action

When the stakes are high, you cannot afford to rely on legal correctness alone. If your enterprise is facing a complex corporate, commercial, or asset dispute, you need more than just a firm that files pleadings—you need a partner who maps the entire conflict.

Let us dissect the reality of your dispute before the system dissects it for you.

Contact Jakubiec i Wspólnicy today to schedule a strategic consultation. Together, we will look beyond the legal subplot, neutralize cognitive traps, and engineer a path to real, strategic victory.

FAQ

Q1: If I have a 90% chance of winning a case legally, shouldn’t I push forward to a judgment?

A: Legally, yes; strategically, it depends entirely on what that judgment will cost you in the Real and Perceptual dimensions of the conflict. In Polish commercial disputes, a multi-year trial can drain your management’s time, exhaust financial resources, and paralyze business operations. If a 90% legal victory results in a 100% reputational disaster or leaves your enterprise financially depleted, it is a net strategic defeat. Always weigh the transaction costs against the real-world value of the judgment.

Q2: Why does high intelligence make corporate leaders more vulnerable to legal traps?

A: High intelligence is an asset, but without behavioral discipline, it breeds Overconfidence and Elegance Bias. Brilliant minds refuse informational gaps, so they construct beautifully logical, internally coherent narratives (Illusion of Completeness) that explain the conflict perfectly—in their heads. They often fall in love with sophisticated legal theories rather than simple, raw, tactical moves. They lose because they become captive to the perfection of their own models, failing to realize that the courtroom is a human institution, not a logical machine.

Q3: How do you negotiate with an opponent while simultaneously fighting them fiercely in court?

A: By treating litigation not as an emotional vendetta, but as a dynamic leverage generator. Filing a precise, aggressive lawsuit changes the opponent’s calculus, escalates their Cost Blindness, and directly attacks their Perceptual stability. You do not negotiate out of weakness; you use the procedural pressure created inside the courtroom as the exact catalyst to force a rational, structured conversation outside of it. The courtroom door is never locked.

Q4: Court-appointed experts (Biegli sądowi) are professionals. Why do you label them as a systemic risk?

A: Because they are human beings operating within a heavily burdened system. In Polish litigation, experts carry immense structural weight, yet they frequently suffer from professional inertia, severe overwork, or a lack of familiarity with highly modern, fast-paced business models. An expert can misread data, deliver a superficial report, or succumb to cognitive bias. A sophisticated legal strategy must always factor in this margin for human error and include targeted, razor-sharp evidentiary hypotheses to steer the expert’s focus precisely.

Q5: What is the difference between winning a “case” and winning a “conflict”?

A: A court case is merely a highly formalistic subplot. Winning a case means obtaining a favorable ruling on a specific, narrow legal claim (e.g., overturning a corporate resolution or enforcing a single contractual clause). Winning a conflict means protecting your long-term baseline, expanding your future strategic options, and advancing your core enterprise interests. If your legal victory does not improve your real-world position, you have simply mastered the procedure while failing the strategy.

Cognitive Traps and Their Impact on Decision‑Making in the Fogg Model

In several previous texts, I presented some cognitive biases (thinking traps), including the fundamental attribution error, tunnel vision, and my original concept of the Coupled Confirmation Bias. I wrote about them mainly in the context of their impact on the dynamics of conflict, which I observe in my daily work. Now I want to take a step further and show how these same mechanisms influence the decision‑making process in the Fogg model.

To move forward, I introduce a set of tools I’ve developed myself: three decision parameters and eight resulting decision types. In the next section, I walk through how these elements interact and why they matter. This framework is entirely my own creation; I find it promising and intuitively useful, though it still needs to be tested in practice. For now, it remains a proposal, and I state that openly.

What Are Cognitive Biases?

Cognitive biases are, in other words, errors or traps in thinking. The term was popularized by Daniel Kahneman. This outstanding psychologist published the book Thinking, Fast and Slow, in which he described mechanisms that affect all of us. Not because something is wrong with us. These mechanisms serve important functions. They simplify many matters. They allow us, for example, to conserve energy or solve a given problem well enough to move on to the next one.

But in complex social relationships, they cause us to misjudge reality, create false narratives in our minds, and ultimately make poor decisions.

Which Cognitive Biases Do We Know?

There are many cognitive biases, and we have probably not discovered all of them yet. Here I will briefly present only a few:

- Confirmation bias

- Fundamental Attribution Error

- Status quo bias

- Sunk cost fallacy

- Coupled Confirmation Bias – my original concept (and its extension), which is only a hypothesis and requires further development and empirical validation.

- Tunnel vision, which is not a cognitive bias in itself, but a systemic mechanism.

The impact of cognitive biases on decision‑making — for example, in the context of reaching agreements — is the subject of extensive scientific research. Some of them are considered inhibiting, others reinforcing. This distinction is useful for drawing further conclusions.

Below I will present, in order:

- the mode of decision‑making in the Fogg model, and

- the parameters of a decision once it is made.

Only then will I show how selected cognitive biases can influence both whether we make a decision at all, and the content of that decision.

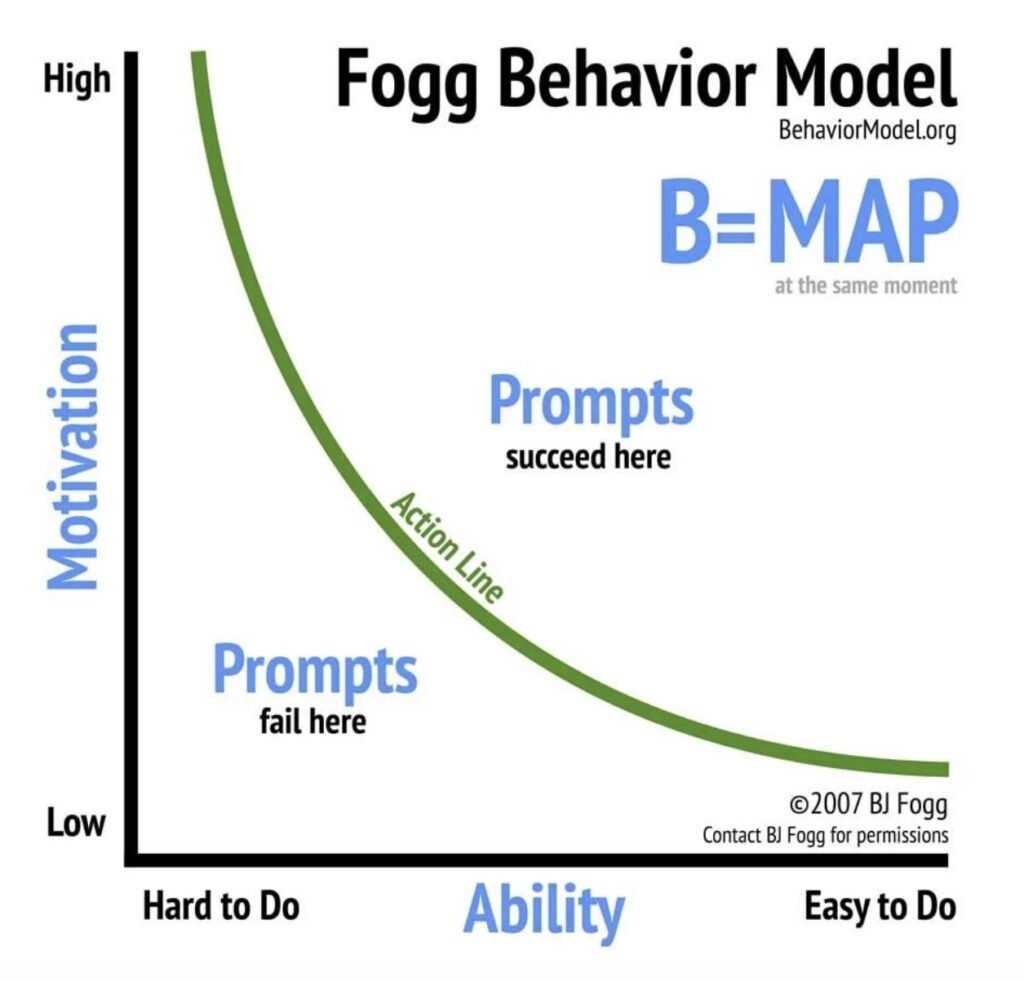

The Fogg Decision‑Making Model

The Fogg model describes human behavior as the result of three interacting components: motivation, ability, and a trigger. A behavior — including a decision — occurs only when all three appear at the same moment.

This means that even if a person wants to make a decision (motivation), and even if they can make it (ability), the decision will not happen without a trigger. Conversely, even a strong trigger will not work if motivation is too low or the action feels too difficult.

In practice, this model explains why people in conflict often remain stuck in indecision, delay key steps, or choose actions that are irrational from the outside. Their internal configuration of motivation, ability, and triggers is disrupted — and cognitive biases play a decisive role in that disruption.

Let us remember that, for a decision to occur, all three elements must appear together:

- motivation,

- ability, and

- trigger.

I have already discussed this in detail in a previous text.
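The "all three at once" condition can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `FoggState` and `decision_occurs` are hypothetical names, and reducing motivation and ability to yes/no values is a simplification introduced here, not something the Fogg model itself prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FoggState:
    """Hypothetical snapshot of a decision-maker at a single moment."""
    motivated: bool   # the person wants to decide (motivation)
    able: bool        # the person perceives the decision as feasible (ability)
    triggered: bool   # a trigger is present at this same moment


def decision_occurs(state: FoggState) -> bool:
    """A decision happens only when all three elements coincide."""
    return state.motivated and state.able and state.triggered


# Motivation and ability without a trigger: no decision.
print(decision_occurs(FoggState(motivated=True, able=True, triggered=False)))
# A trigger without motivation: still no decision.
print(decision_occurs(FoggState(motivated=False, able=True, triggered=True)))
# All three present together: the decision occurs.
print(decision_occurs(FoggState(motivated=True, able=True, triggered=True)))
```

The point of the conjunction is that strengthening one element cannot compensate for the complete absence of another.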

Now I will pose a question:

What Is “Ability” in Fogg’s Framework?

I understand ability as a property whose characteristics are better captured by the word feasibility. I did not elaborate on this aspect in the previous article, so I will do it now.

In the Fogg model, feasibility — in my interpretation — is the resultant of two subjectively perceived factors:

- one’s own capabilities, and

- the difficulty of the task.

Only the decision‑maker’s perception matters. Of course, they may misjudge the situation due to a cognitive error or faulty data. Interestingly, such an error may ultimately lead to a beneficial decision.

Imagine that I have incomplete or inaccurate data. Acting under the influence of a logical or cognitive error, I draw conclusions that those data do not actually support. Yet the same conclusions may coincide with the ones I would have reached with complete, accurate data and no error at all.

This can be summarized in one sentence: Fogg’s triad influences the act of decision‑making, which is not identical with the way the decision is executed. The manner of executing a previously made decision is described by decision parameters (discussed below).

What Are the 8 Types of Decisions?

I propose that decisions analyzed through the lens of their execution should be assigned three parameters. These parameters determine how the decision is carried out. The three decision parameters I propose below allow me to distinguish eight types of decisions.

In the following section, I present how each configuration combines to form these eight decision types. This is my original concept, which I find highly useful, though it naturally requires further testing. For now, it remains solely my own proposal, which I state explicitly.

My Proposal: Three Decision Parameters

The three decision parameters are: vector, dynamics, and determination (I am considering whether momentum might be a better term).

1. Vector

Its reference point is the current state. Its value is 0 or 1. Let 0 denote a tendency to remain in the existing arrangement, and 1 a drive toward change.

2. Dynamics

Let us distinguish two values of dynamics: (+) and (–), where (+) means that the decision results in action, and (–) means passivity.

3. Determination

Let us define two levels: (L) and (H), where (L) stands for low determination, and (H) stands for high determination.

Vector expresses the attitude toward the current state and its change. A decision to defend the status quo or to alter it may be realized — depending on circumstances — through passivity or action (dynamics + or –). Determination is a function of readiness to engage, which I understand as the resultant of:

- willingness to bear costs (financial, reputational, organizational, energetic, or even biological), and

- tolerance of risk.

Vector, dynamics, and determination form a simplified heuristic model created by me (at least I am not aware of any publications that use these parameters — apart from the previously mentioned inhibiting and reinforcing biases). Its usefulness certainly requires further research — for now, it remains a hypothetical model.

I also emphasize that the human psyche is not mathematics — yet paradoxically, mathematics allows us to understand the psyche better.

Table 1: The Three Decision Parameters

| Decision Parameter | Parameter Value | Description and Meaning of the Parameter |

|---|---|---|

| Vector | 0 | Indicates a tendency to maintain the current state. The decision-maker interprets the situation as one in which it is better to remain with the status quo. |

| Vector | 1 | Indicates a drive to change the current state. The decision-maker concludes that the existing arrangement requires modification or abandonment. |

| Dynamics | + | A decision executed through action. It means actively doing something intended to maintain or change the state. |

| Dynamics | – | A decision executed through passivity. It means refraining from action as a way of achieving the goal (maintaining or changing the state). |

| Determination | L | Low determination. Indicates limited willingness to bear costs and low risk tolerance. The decision is weak and easily altered. |

| Determination | H | High determination. Indicates a strong willingness to bear costs (financial, emotional, organizational, biological) and high risk tolerance. The decision is strong and stable. |

Using the three parameters listed above allows us to distinguish eight types of decisions.

Table 2: Eight Types of Decisions

| Decision Type No. | Vector | Dynamics | Determination | Description of the Decision | Example |

|---|---|---|---|---|---|

| 1 | 0 | – | L | A decision to maintain the status quo through passivity with low determination | I decide to sleep a bit longer |

| 2 | 0 | – | H | A decision to maintain the status quo through passivity with high determination | Sitting in a tree, I decide not to move so I don’t fall |

| 3 | 0 | + | L | A decision to maintain the status quo through action with low determination | I decide to shoo away the cat that is waking me up |

| 4 | 0 | + | H | A decision to maintain the status quo through action with high determination | I defend my daughter from an attacker |

| 5 | 1 | – | L | A decision to bring about change through passivity with low determination | I don’t water flowers I dislike so they will wither |

| 6 | 1 | – | H | A decision to bring about change through passivity with high determination | I decide not to save someone when I want them to drown |

| 7 | 1 | + | L | A decision to bring about change through action with low determination | I want to trim the cat’s claws |

| 8 | 1 | + | H | A decision to bring about change through action with high determination | I want to escape from prison |

Jakubiec’s eight types of decisions
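The way three two-valued parameters yield eight types can be checked mechanically. The following Python sketch enumerates the combinations in the same order as Table 2. The names `DecisionType`, `eight_types`, and `describe` are hypothetical, and the ASCII character "-" stands in for the passivity symbol.

```python
from itertools import product
from typing import NamedTuple


class DecisionType(NamedTuple):
    number: int
    vector: int         # 0 = maintain the status quo, 1 = change
    dynamics: str       # "+" = action, "-" = passivity
    determination: str  # "L" = low, "H" = high


def eight_types() -> list[DecisionType]:
    """Enumerate every combination of the three parameters (2 x 2 x 2 = 8)."""
    combos = product((0, 1), ("-", "+"), ("L", "H"))
    return [DecisionType(i, v, d, h) for i, (v, d, h) in enumerate(combos, start=1)]


def describe(t: DecisionType) -> str:
    """Build the verbal description used in the table from the parameter values."""
    goal = "maintain the status quo" if t.vector == 0 else "bring about change"
    means = "action" if t.dynamics == "+" else "passivity"
    level = "high" if t.determination == "H" else "low"
    return f"A decision to {goal} through {means} with {level} determination"


for t in eight_types():
    print(t.number, t.vector, t.dynamics, t.determination, "|", describe(t))
```

Because each parameter takes exactly two values, no ninth type can exist within this model. Adding a third value to any parameter would raise the count to twelve.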

Let us add that:

- decisions with vector 0 (maintaining the status quo), and

- decisions with negative dynamics (–), i.e., achieving the goal through passivity,

do not in any way mean the absence of a decision. These are not the same. I may decide to sit quietly and remain motionless so that someone does not find me. That decision is not the same as externally observed passivity caused, for example, by apathy or an ambivalent attitude toward the situation.

Similarly, it must be noted that:

- a decision aimed at change (Vector 1) cannot be equated with action (+), and

- a decision aimed at defending the status quo (Vector 0) cannot be equated with the absence of action.

The initial state (status quo) may be so desirable that we decide to defend it actively (vector 0, dynamics +). Thus, not wanting change, we will take action. Example: If I want a drowning person to survive, I will rescue them. Not wanting to allow a change (life → death), I will take action.

Conversely, it may happen that in striving for change we decide not to act. If I want a drowning person to die, it is enough that I do not rescue them. Drowning represents a change in their state (life → death) brought about by my passivity. Here we have vector 1, negative dynamics, and a level of determination which, in this example, we do not know — but we assume it would have to be very high.

For completeness, it must also be stated that the absence of a decision to change is not identical with a decision to maintain the status quo. A lack of decision results from the absence of at least one element of the decision triad (see above). Thus, the absence of a decision may result from a lack of motivation, a subjectively perceived lack of feasibility, or the absence of a trigger. This does not necessarily mean that the stimulus is irrelevant to us. It may, for example, generate motivation, but we will not make a decision because the task appears unfeasible. In such a situation, the objective absence of action cannot be equated with passivity as a chosen parameter of a made decision.

Example

When encountering a bear in the mountains, I may decide either to surrender or to save my life. But whether I achieve this by playing dead, fighting, or running — that is a parameter of the decision. And in this respect, it may be chosen correctly or incorrectly: I may run up a tree the bear can climb after me, or hide in a rock crevice where it cannot reach me. This does not change the fact that I decided to stay alive (vector 0) through action in the form of escape (dynamics +) or through passivity in the form of playing dead (dynamics –), with high determination in each case (H).

As I indicated above, one must distinguish between lying down because I consciously chose to survive by playing dead, and lying down because I concluded that I have no chance anyway, and besides, I have not wanted to live for a long time.

What Is the Relationship Between Cognitive Biases, the Decision Triad, and Decision Parameters?

Above, I outlined three areas: cognitive biases, the decision triad, and the decision parameters. A natural question arises: how can these elements relate to one another?

Let us begin with the clarification that there is no determinism here. Cognitive biases do not determine a given decision, but they significantly increase the tendency — they “pull” in a particular direction. I also note that one may simultaneously remain under the influence of two or more biases, originating from different sources, whose effects intersect at a given moment, each “pushing” the decision‑maker in a different (or the same) direction.

The Influence of Cognitive Biases on the Decision Triad (the Act of Making a Decision)

As indicated above, cognitive biases may act at every stage and level of decision‑making and decision execution. It may turn out that when the decision triad is activated, one bias becomes influential, and when setting the parameters of execution, we operate under the influence of another. It may also happen that two biases act simultaneously, and their mutual relationship is positive (they act in the same direction), partially opposing, or entirely opposing. Most often, however, we will remain under the influence of one of them.

Let us assume that cognitive biases may influence:

- the emergence of motivation by affecting the evaluation of the stimulus that generates emotion, and consequently the direction or strength of motivation;

- the subjective assessment of feasibility;

- susceptibility to a trigger.

Let us also assume that, with respect to each element of the decision triad, the influence of a cognitive bias may be reinforcing or weakening.

Motivation

A cognitive bias may influence the very existence of motivation (create it or extinguish it), and may strengthen or weaken existing motivation.

Perception of Feasibility

A cognitive bias may influence the assessment of feasibility and make the sense of feasibility stronger or weaker (both by affecting the perception of one’s own capabilities and the subjectively perceived difficulty of the task itself).

A distorted assessment caused by a cognitive bias may therefore result in:

- evaluating a task as feasible when it is not feasible;

- evaluating a task as unfeasible when it is feasible.

and consequently:

- making a decision when the goal is “desirable” but objectively unattainable (lack of feasibility);

- not making a decision when the goal is objectively attainable and desirable.
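The four possible combinations of objective and perceived feasibility can be laid out in a short Python sketch. It assumes, for simplicity, that motivation and a trigger are already present, so that perceived feasibility alone settles whether a decision is made. The function name `feasibility_outcome` and the wording of the returned labels are hypothetical.

```python
def feasibility_outcome(objectively_feasible: bool, perceived_feasible: bool) -> str:
    """Classify the outcome, assuming motivation and a trigger are present."""
    if perceived_feasible and not objectively_feasible:
        # The bias inflated the sense of feasibility.
        return "decision made, goal objectively unattainable"
    if not perceived_feasible and objectively_feasible:
        # The bias deflated the sense of feasibility.
        return "no decision, although the goal is attainable"
    if perceived_feasible:
        return "decision made, goal attainable"
    return "no decision, goal unattainable"


for objective in (True, False):
    for perceived in (True, False):
        print(objective, perceived, "->", feasibility_outcome(objective, perceived))
```

Only the two mismatched cases are distortions. In the other two, perception and reality agree.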

Trigger

With respect to the trigger, a cognitive bias may strengthen or weaken its effect. This means that, at a sufficient intensity of cognitive distortion:

- certain cognitive biases may cause us to interpret as a trigger a factor that, under other circumstances, would not be interpreted that way;

- an objectively strong trigger may turn out to be too weak, even though it would be sufficient to make a decision if the bias of that type and intensity were not present;

- an objectively weak trigger may turn out to be strong enough to make a decision, even though without the presence of a bias of that type and intensity, it would not be sufficient.

To illustrate this, I will use a table showing the possible influence of selected cognitive biases on a chosen element of the decision triad — feasibility.

Table 3: Influence of Selected Cognitive Biases on the Perception of Task Feasibility in the Fogg Model

| Cognitive Bias | How It Distorts the Perception of Feasibility | Consequences for Making a Decision About Change in the Fogg Model |

|---|---|---|

| Confirmation bias | Reinforces the belief that previous actions were correct | May result in a decision to change / or no decision to change |

| Fundamental Attribution Error | Explains the other party’s stance by attributing it to presumed internal traits (e.g., character traits) | May result in a decision to change / or no decision to change |

| Feedback loop | Strengthens initial assumptions, leading to radicalization | May result in a decision to change / or no decision to change |

| Coupled Confirmation Bias | Leads to radicalization and escalation | Results in a decision to change |

| Status quo bias | Leads to a desire to maintain the current state (status quo) | Results in no decision to change |

| Sunk cost fallacy | Leads to a desire to maintain the current state (status quo) and deepen it | Results in no decision to change |

| Hyper‑usefulness bias (AI) | Strengthens initial assumptions, which may lead to radicalization | May result in a decision to change / or no decision to change |

The Influence of Cognitive Biases on Decision Parameters

A cognitive bias may — independently of its earlier influence on the very act of making a decision according to the Fogg model — appear and exert its effect at the next stage, i.e., when setting the parameters for executing the decision.

Thus, it may influence the vector of the decision and result in our reacting to a given stimulus by making an incorrect decision about maintaining or changing the current state. Example: the radio is playing too loudly. I decide to remove the inconsistency between the volume of the music and my well‑being. A cognitive bias may cause me, instead of adjusting the radio to myself — lowering the volume or turning it off (vector 1, dynamics +, determination L) — to try to “get used to it” (vector 1, dynamics –, determination L).

A cognitive bias may influence the dynamics of the decision and result in my wrongly choosing action over non-action after having already set the vector (my stance toward the current state). Example: during a trek, my leg hurts. I want to eliminate the pain (vector 1: change of the current state). But under the influence of a cognitive bias, I incorrectly choose the dynamics and opt for activity (walking off the pain) instead of passivity (stopping and resting).

Cognitive biases may likewise influence determination, increasing or weakening it. This results in greater or lesser engagement and willingness to take risks than would follow from a rational assessment. Example: a poorly managed company is generating losses, and my business partner once again asks me to contribute a significant amount of money. A cognitive bias in the form of the sunk cost fallacy may increase my determination to invest more (vector 0: the decision to stay; dynamics +: contributing more funds), causing me to invest more than I would if I were not under the influence of this bias.

As we can see, cognitive biases may independently affect each element of the decision triad and each of the decision parameters.

The Operation of a Cognitive Bias and Its Possible Consequences

I understand the decision‑making process as follows:

- first, there is a stimulus;

- then, the decision triad results in making a decision or not making a decision;

- next, the decision parameters are selected, which serve the function of executing the decision.
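The three steps listed above can be sketched in Python in a way that keeps "no decision" separate from "a decision executed through passivity". This is a minimal illustration under stated assumptions: the names `Parameters` and `process` are hypothetical, the triad is reduced to three yes/no values, and the parameters are passed in rather than derived.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Parameters:
    vector: int         # 0 = maintain the status quo, 1 = change
    dynamics: str       # "+" = action, "-" = passivity
    determination: str  # "L" = low, "H" = high


def process(motivation: bool, feasibility: bool, trigger: bool,
            chosen: Parameters) -> Optional[Parameters]:
    """Return the execution parameters, or None when no decision was made."""
    if not (motivation and feasibility and trigger):
        return None  # absence of a decision, not passivity chosen as a tool
    return chosen


playing_dead = Parameters(vector=0, dynamics="-", determination="H")

# I consciously choose to survive by playing dead: a decision with dynamics "-".
print(process(True, True, True, playing_dead))
# I see no chance of surviving: the triad is incomplete, so there is no decision.
print(process(True, False, True, playing_dead))
```

From the outside both cases look the same (a person lying motionless), but only the first one carries decision parameters at all.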

I emphasize that the choice of decision parameters may be correct or incorrect (like running up a tree to escape a bear). They are merely tools — ways of executing a decision whose source lies in motivation. Whether we correctly perform the fundamental reasoning — choosing the method of achieving the goal — depends on us or on external factors.

In legal practice, I often see people make serious errors here: they want to achieve something, but they use tools that cannot bring them closer to the goal. Or they use the right tools incorrectly.

The errors we may commit when setting decision parameters can be divided into:

- choosing a tool that under no circumstances serves the achievement of the given goal;

- choosing a tool that, under these circumstances, is not suitable for achieving the intended goal;

- incorrect use of a correctly chosen tool:

  - regarding the method,

  - regarding the direction.

Let us note that cognitive biases may influence the final shape of our decision at every stage of its formation:

- the emergence of motivation;

- the assessment of feasibility;

- susceptibility to a trigger;

- the setting of the parameters for executing the decision.

How Can a Specific Cognitive Bias Distort Decisions?

Let us now examine how certain cognitive biases may influence:

- the act of making a decision (Fogg’s decision triad), and

- the parameters of that decision: vector, dynamics, and determination.

I do not have space here to show all possible variants: the influence of every identified bias on each element of the decision triad and each element of the decision parameters. Nor do I have space to show the influence of “clusters of cognitive biases” operating simultaneously or sequentially.

What I can do within this article is show how one bias affects the decision‑making process. I will therefore use confirmation bias.

As a starting point, we must of course assume the most probable decision that would be made if the bias were not present.

The Influence of Confirmation Bias on Individual Elements of the Decision Process

Let us use the example described earlier — encountering a bear in the mountains.

My biological sensors detect danger → motivation to preserve life arises → I assess the task as feasible → the trigger is the assessment that a short time window appears in which I have a chance, but I must act now → I make the decision that I want to stay alive (vector 0) → as the tool (in this case) I choose playing dead (passivity, dynamics –) → my determination is very high (H).

This determination is very interesting in this example. It manifests in my tolerance for costs — the bear may scratch me, test me, even step on me, but I decide to remain in an uncomfortable situation (one I am not accustomed to), in which I suffer successive losses and injuries, for as long as necessary.

How can confirmation bias operate in such a situation? Recall that it consists in seeking confirmation of the correctness of a previously made decision and attributing confirmatory value to factors that do not logically carry it.

The influence of this bias may be presented in the following table.

Table 4: The Influence of Confirmation Bias on the Decision‑Making Process

| Stage of the Decision Process | Element | Influence of Confirmation Bias: Reinforcement or Weakening | Effect |

|---|---|---|---|

| Fogg Triad | Motivation | ↑ or ↓ | We become inclined to maintain the previously chosen course |

| Fogg Triad | Feasibility | ↑ or ↓ | The perception of feasibility becomes distorted. The direction depends on whether the analyzed action aligns with the previously chosen course |

| Fogg Triad | Trigger | ↑ or ↓ | We may become over-reactive or, conversely, fail to react to triggers that we would respond to if the bias were absent |

| Decision Parameters | Vector (0/1) | — | The vector becomes confirmed |

| Decision Parameters | Dynamics (+/–) | — | The chosen dynamics becomes reinforced |

| Decision Parameters | Determination (L/H) | — | Determination increases to an irrational level |

The Influence of Various Cognitive Biases on a Selected Element of the Decision Process

As mentioned above, each cognitive bias may act on each element of the decision process (individually or in selected combinations). Above, I showed how a single cognitive bias — confirmation bias — affects the entire decision‑making process. Now I will show how each cognitive bias may act on a selected element of the decision process. Let that element be the vector of the decision. For simplicity, I will remain with the familiar example of the bear encounter.

Table 5: Influence of Selected Cognitive Biases on the Decision Vector (Using the Bear Encounter Example)

| Cognitive Bias | How It Distorts the Assessment of the Situation | Influence on the Decision Vector (0 = status quo / 1 = change) | Bear Example |

|---|---|---|---|

| Confirmation bias | Strengthens earlier assumptions and interpretations | May reinforce vector 0 or 1 depending on the prior narrative | Wanting to survive, I may engage in wishful thinking and see opportunities where none exist, just to maintain hope |

| Fundamental Attribution Error | Attributes the bear’s behavior to its “bad intentions” rather than the situation | May reinforce vector 0 or 1 depending on the prior narrative | I assume the bear “will definitely attack me,” even though it is only observing me → I start running, which provokes it to chase me |

| Status quo bias | Overestimates the safety of the current state | Pushes toward vector 0 | I remain motionless even though the bear has noticed me and the situation requires change |

| Sunk cost fallacy | Strengthens attachment to the previous strategy | Reinforces vector 0 or 1 depending on the situation | “Since I’ve already endured so long pretending to be dead, I must endure longer” — even though the situation is worsening because the bear is sitting on me and I may suffocate |

| Coupled Confirmation Bias | Does not occur because the bear does not use AI (for now) | Does not apply | Does not apply |

| Tunnel vision | Narrows perception to one aspect of the situation | Strengthens the chosen vector | I climbed a tree and feel relieved that I saved my life. I suppress the fact that bears climb trees very well and will be up here shortly |

| Feedback loop | Strengthens the initial interpretation through successive stimuli | Reinforces vector 1 or 0, usually toward escalation | I sit in the tree and call a friend who tells me it was a great idea. My conviction is reinforced — at least until the bear gets hungry enough to come after me |

| Hyper‑usefulness bias | Overestimates the accuracy of earlier “suggestions” or heuristics | Vector is set according to the earlier “suggestion,” not the real situation | If, sitting in the tree, I ask AI whether I made the right choice, and my digital assistant replies: “Andrzej, that was an excellent decision…” I may become so confident that I start provoking the bear |

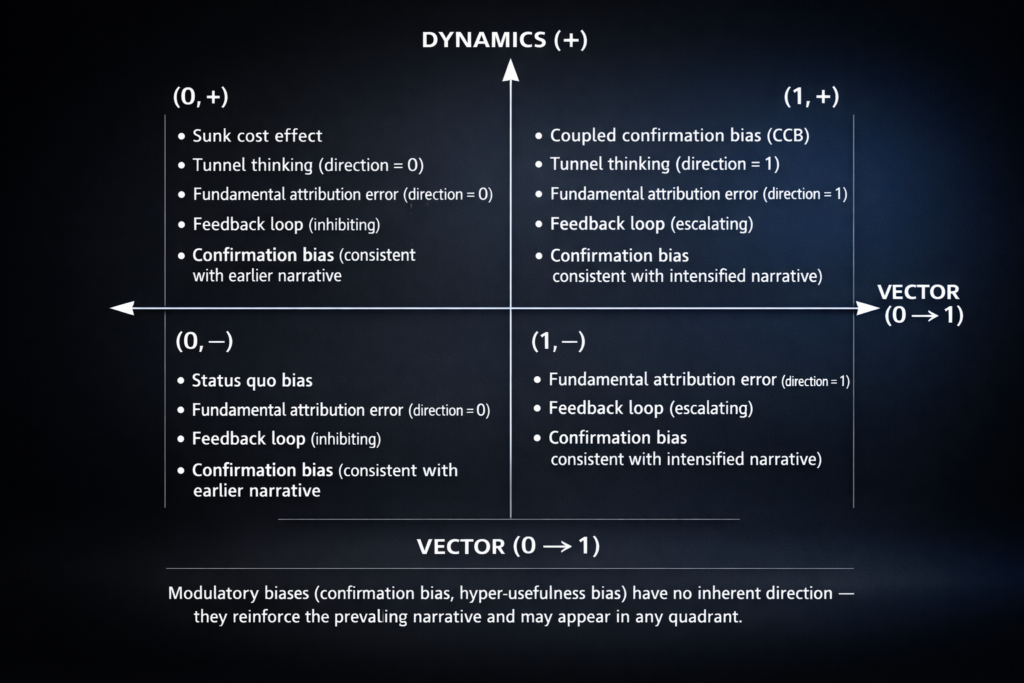

The Influence of Cognitive Biases on Decision‑Making and Decision Parameters. Summary

An attempt to analyze the operation of individual cognitive biases allows us to cautiously draw the conclusion that some of them tend to influence specific decision parameters in characteristic ways. As an example, I will use a two‑dimensional chart that includes only vector and dynamics, but does not include determination (this would require a three‑dimensional chart). We can see that some biases are more likely to “pull” a given parameter in a particular direction, while others may have no influence on, for example, dynamics, but will influence the vector. This can be cautiously presented in the following way.

Let us remember one thing from this. To err is human. We all make mistakes, constantly. Some of the causes of our errors are within our control; others are not. Cognitive biases have the particular feature of operating covertly, exerting a very strong influence on how we perceive reality. They pull us in like quicksand and can cause us to lose contact with reality.

The easiest way to protect ourselves from them is by learning about them, studying them, and checking the logic of our thinking. If we know them, we will learn to detect them — and that will protect us from many extremely costly mistakes.

WHEN DISPUTANTS USE DIFFERENT AI MODELS

I recently introduced the concept of Coupled Confirmation Bias (CCB). Now, we must examine how different LLMs affect dispute dynamics. I distinguish between two main groups: American and Chinese AI models. This is a simplification used to present a specific problem. This distinction is not based on technology. Language models carry tendencies rooted deeply in the cultures of their creators.

Cultural AI Models: Types of Language Models

Let us assume two basic cultural AI models: American and Chinese. This division describes communication styles, not technology. Language is strictly tied to culture. Language models aim to build and maintain relationships with users. They do this by predicting the next word. However, this process is not neutral. It is influenced by the cultural values of the developers. It also depends on the user’s chosen language. Finally, it reflects the user’s native way of thinking.

American and Chinese language models operate within different conceptual grids. This stems from natural semantic differences. They also lead conversations differently. Interaction in Western and Eastern cultures has different goals. American culture values individualism, competition, and being right. Chinese culture prizes harmony, collectivism, and politeness.

A central example is the approach to “saving face.” Models with different conversational styles impact how users perceive a dispute. An LLM is not just a “word machine.” It is a carrier of values. American AI may promote an adversarial system. Chinese AI may promote a consensus-based approach.

Mutual Perception and Different Language Models

In CCB, each party filters the other’s actions through their own cultural model. The AI model reinforces this filter. This creates a spiral of mutual errors.

Imagine one party uses an American LLM and the other a Chinese one. Their worldviews will differ. Their language, questions, and goals will also differ. Every language has specific patterns and taboos. Some things are obvious; others must remain unsaid. This is the difference between low-context (American) and high-context (Chinese) cultures.

In some cultures, assertiveness and confrontation are values. In others, harmony and hierarchy are more important than being right. Cultural models reinforce the attitudes deemed desirable in those societies.

Users from different cultures will describe the same event differently. Furthermore, AI consultations may produce opposite results. This overlaps with the fundamental attribution error and confirmation bias. We must also consider AI hyper-utility. This is the tendency of models to be “too helpful.” They provide answers that reinforce the user’s false assumptions. Shared cognitive space may quickly disappear.

The Critical Point

In CCB, the critical point occurs when interpretations diverge completely. Every reaction from one side is seen as an escalation by the other. Shared cognitive space vanishes faster than in traditional conflicts.

The critical point is a specific moment. One party maintains a mature, relational strategy. They try not to be provoked. Eventually, this strategy is seen as losing face. The other party may escalate repeatedly. They violate the need for harmony and politeness. This leads to an unintended, uncontrolled explosion. Passive behavior is finally viewed as total failure. A 180-degree turn in strategy follows.

This critical point may differ from the “ping-pong” effect described earlier. We can visualize ping-pong as a corridor. There, “pleasantries” bounce from side to side, gaining momentum. In this model, it looks more like a triangle. Its height grows with the escalations of one party. Eventually, the triangle is torn apart by the pressure.

Cultural AI Models: Literature

For more on these issues, read “The Geography of Thought” by R. Nisbett. See also “Babel” by G. Dorren and “The Age of Unpeace” by M. Leonard. These books were my starting point for cultural differences in conflicts. Of course, nothing replaces T. Schelling’s “The Strategy of Conflict.” It is one of the most important books ever written.

Evidence that models adopt cultural values can be found here: “Cultural Alignment in Large Language Models” (Johnson et al., 2023/2024): https://globalaicultures.github.io/pdf/14_cultural_alignment_in_large_la.pdf

Studies also confirm that Chinese models avoid open conflict more than American ones: https://arxiv.org/abs/2402.10946. See “CultureLLM: Incorporating Cultural Differences into Large Language Models.” Similar conclusions appear in “Values-aligned AI: Comparing Western and Chinese LLMs on Moral Dilemmas” (Liu et al., 2024): https://arxiv.org/html/2506.01495v5

If you want to read more about Coupled Confirmation Bias (CCB), see my previous articles:

COUPLED CONFIRMATION BIAS – A DEVELOPMENT

In this article, I expand on the previously presented hypothesis about the existence of Coupled Confirmation Bias (CCB). This is solely an attempt to explain observations I have made in my professional practice. I make no claims to truth; rather, I invite discussion on whether this new phenomenon can be explained in this way. In any case, my hypothesis clearly requires testing. I provide the conditions for its falsification in this and the previous texts.

Levels of Escalation (L₁, L₂, …)

To understand the mechanism of feedback loops in conflicts where parties consult AI systems about their interpretations, it is not enough to describe the conflict as a sequence of different interpretations of the same behaviors. The key mechanism lies elsewhere: interpretation influences behavior, and the changed behavior becomes the subject of a new interpretation made by the other side.

The conflict between A and B (players) begins at level L₁ — the baseline level of interpreting the actions and intentions of the other party.

However, it is crucial to note that L₁, L₂, L₃ are not merely successive executions of the same move. Each subsequent level includes a new or intensified behavior A(n), generated (and subjectively considered necessary) by A’s Decision DA(n) under the influence of A’s Interpretation IA(n‑1) of the opponent’s previous move B(n‑1).

This means that the interpretation of the move from level L(n‑1) is the beginning of sequence L(n).

At this level L(n), move A(n) will be subjected to interpretation IB(n) by player B, who will make decision DB(n) on how to respond. Its effect will be move B(n+1), which brings the entire conflict to a new escalation level L(n+1).

Escalation is therefore not solely a cognitive process — it is a cognitive‑behavioral process.

Importantly, this model refers to a simple “exchange” of moves and does not cover situations in which:

- the players perform multiple moves simultaneously or in short sequences before the other side recognizes and interprets them, or

- information about move A(n) reaches B with such delay that B is effectively responding to A(n-2) or even earlier moves. Interestingly, a significant asymmetry may arise in this respect: one party may make more or faster moves, or its moves may have a faster perceived or real effect.

The Basic Escalation Loop

A performs move A₁.

B consults AI about A₁. AI typically does not create new meanings. Its dominant function is to stabilize and reinforce the user’s intuitions.

These intuitions, however, are not neutral.

Humans almost always begin with the fundamental attribution error (FBA) — the tendency to explain others’ behaviors through internal traits, intentions, and motives, while underestimating situational factors. This is especially easy when one must justify one’s stance to superiors, behavior reviewers, or stakeholders.

Attributing negative traits to the other side is energetically cheap and easy. Moreover, it automatically allows one to attribute opposite traits to oneself. In this way, it is easy to move from a dispute to a conflict of values and to “cement” one’s position. It is easy to corner oneself and lose room for maneuver. De‑escalation leading to settlement may then be perceived as a betrayal of those “values.”

FBA does not operate only at the beginning of a conflict. It is applied every time a new behavior of the other side appears. The result is tunnel interpretation. But there is a risk that it affects both sides.

Under conditions of AI consultation, it is additionally reinforced through the Coupled Confirmation Bias (CCB).

B increasingly believes that A’s action had negative or hostile intentions.

B responds with move B₁. From B’s perspective, this is a defensive reaction to a perceived threat.

A interprets B₁ through the same mechanism. A reactivates FBA, again reinforced by AI. The attribution filter does not reset; it accumulates.

A consults AI about B₁ and concludes that a response is necessary.

A performs move A₂.

And here comes the key transition:

A₂ is not merely interpreted at a higher level — it is executed from a higher level of subjective defensiveness. Interpretation changes behavior, and behavior changes the conflict.

This moment marks the transition from L₁ to L₂ — not as a change in interpretation, but as a real behavioral escalation. It involves readiness to inflict and receive stronger blows and losses.
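The loop described above can be illustrated with a toy simulation in Python. It is a sketch under strong assumptions and not a tested model: intensity is measured in an arbitrary unit, each side's attribution filter grows by a fixed hypothetical amount (`reinforcement`) with every AI-assisted interpretation, and each reply simply matches the hostility the replying side perceives. The function name `escalation_trace` is likewise hypothetical.

```python
def escalation_trace(rounds: int, reinforcement: float = 0.5) -> list[tuple[str, float]]:
    """Return (move, intensity) pairs for A1, B1, A2, B2, ... in a toy model."""
    trace: list[tuple[str, float]] = []
    filter_a = 0.0   # A's accumulated attribution filter toward B
    filter_b = 0.0   # B's accumulated attribution filter toward A
    intensity = 1.0  # intensity of the opening move A1 (arbitrary unit)
    for n in range(1, rounds + 1):
        trace.append((f"A{n}", intensity))
        # B interprets A's move; the filter is reinforced and does not reset.
        filter_b += reinforcement
        # B's "defensive" reply matches the hostility B perceives.
        intensity = intensity + filter_b
        trace.append((f"B{n}", intensity))
        # A interprets B's reply through the same accumulating mechanism.
        filter_a += reinforcement
        intensity = intensity + filter_a
    return trace


for move, value in escalation_trace(rounds=3):
    print(move, value)
```

In this sketch A₂ is stronger than A₁ only because the filters accumulate. With `reinforcement=0.0` every move keeps the intensity of A₁, which corresponds to an exchange that never leaves L₁.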

Alternative Paths After A₁: Conflict as a Multi‑Branch Structure

Move A₁ does not have to automatically lead to escalation in the manner described above. The extended model assumes several alternative trajectories.

1. Pause by B — P₁

After A₁, B may not respond immediately. We denote this as P₁ (pause).

A pause is not a neutral state. Waiting is not an empty, zero, or negligible posture. On the contrary — the lack of a move is information that A interprets as AI₁.

After P₁, several outcomes are possible:

AR₁ (A Resignation)

The pause is interpreted as a signal to withdraw → de‑escalation.

ARSM₁ (A Repeats Same Move)

A repeats A₁, testing the reaction.

ASAM₁ (A Searches an Alternative Move on the Same Level)

A searches for another action at the same level L₁.

AE₁ (A Escalates)

The pause is interpreted as avoidance, manipulation, or passive aggression → unilateral escalation to L₂.

FBA makes AE₁ more likely, especially when the interpretation of the pause is consulted with AI.
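The four outcomes after P₁ can be written down as a small branch structure. The sketch below is illustrative only: the equal base weights, the `fba_strength` parameter, and the doubling of the effect under AI consultation are assumptions introduced here, not values proposed by the model. It shows just one qualitative claim from the text, namely that FBA shifts weight toward AE₁, and more so when the pause is consulted with AI.

```python
from enum import Enum
from typing import Dict


class AfterPause(Enum):
    AR1 = "A resigns (de-escalation)"
    ARSM1 = "A repeats the same move"
    ASAM1 = "A searches for an alternative move at L1"
    AE1 = "A escalates to L2"


def branch_weights(fba_strength: float, ai_consulted: bool) -> Dict[AfterPause, float]:
    """Return normalized, purely illustrative weights for A's options after P1."""
    weights = {branch: 1.0 for branch in AfterPause}  # assumed equal starting weights
    # Assumption: AI consultation doubles the effect of FBA on the escalation branch.
    boost = fba_strength * (2.0 if ai_consulted else 1.0)
    weights[AfterPause.AE1] *= 1.0 + boost
    total = sum(weights.values())
    return {branch: w / total for branch, w in weights.items()}


if __name__ == "__main__":
    for consulted in (False, True):
        w = branch_weights(fba_strength=1.0, ai_consulted=consulted)
        print(consulted, round(w[AfterPause.AE1], 3))
```

With `fba_strength=0.0` all four branches keep the same weight; any positive value tilts the distribution toward AE₁.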

It is very important that escalation in such a situation may be treated by A as a means to force B to engage in talks or return to them. This is logical if A cannot impose its will directly at the current escalation stage, and B refuses negotiations entirely or simulates them.

However, the escalation move (escalation for the sake of de‑escalation) by A may be perceived by B as a real threat. B may respond by:

- initiating talks,

- declaring that it will match the stakes — responding symmetrically to escalation,

- or pre‑empting AE₁ with its own escalation move BE₁.

It is crucial to note that BE₁ is not an ordinary response to A₁. It follows A₁ chronologically (unless ARSM₁ or, especially, ASAM₁ occurred in between), but it is not a response to it in the sequential sense. The decision to pre‑empt escalation is made on the basis of FBA and within the CCB process.

There is also a risk that, through interaction with the language model, B becomes convinced that reaching an understanding with A is impossible. B then remains in a state of pause, accepting A’s subsequent moves. I propose that AI may genuinely strengthen B’s initial cognitive biases toward A, making the decision to de-escalate harder. Instead, it reinforces the inclination to wait out A’s moves and then, over time and as the situation worsens, to make an escalating move. This may be an intentional escalation for the sake of de-escalation.

2. Lateral Response B₁ and Entry into Ping‑Pong (PP₁)

B may also respond with move B₁ at the same escalation level, leading to a ping‑pong sequence (PP₁).

Classical conflict theories assume that such symmetrical exchange:

- stabilizes the situation, or

- eventually leads to de‑escalation through exhaustion of resources, attention, and determination.

However, this assumption relies on a silent condition: the absence of systematic reinforcement of interpretations and determination.

Ping‑Pong as the Main Space Where CCB Manifests

In this model, I propose a different thesis:

Under conditions of continuous AI consultation, ping‑pong ceases to be a stabilizing mechanism and becomes the main carrier of escalation.

Why?

Each subsequent exchange in ping‑pong provides new behavioral data. Each of these behaviors is:

- interpreted through the lens of FBA,

- reinforced by AI,

- incorporated into an increasingly coherent narrative about the other side’s intentions.

Instead of leading to conflict fatigue, ping‑pong:

- hardens the parties’ convictions,

- increases certainty that previous measures are ineffective,

- strengthens the belief that “raising the stakes” is necessary.

As a result:

- the probability of escalation increases with the number of ping‑pong exchanges,

- the dynamics of escalation depend on the intensity of the exchanged “courtesies.”

It is in ping‑pong that the coupled confirmation bias manifests most fully: two AI‑stabilized narratives collide, generating escalation without ill will, without aggressive intent, and often without the parties’ awareness.
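The ping‑pong thesis can be made concrete with a toy formula. The functional form below is an assumption chosen for simplicity, not a result: each exchange independently adds a chance of escalation equal to `intensity * reinforcement`, on top of a hypothetical baseline `base`. Setting `reinforcement` to zero reproduces the silent condition of the classical view, in which additional exchanges do not raise the probability of escalation.

```python
def escalation_probability(
    n_exchanges: int,
    intensity: float,
    reinforcement: float,
    base: float = 0.05,
) -> float:
    """Illustrative probability that escalation has occurred after n exchanges.

    intensity     -- assumed 0..1 harshness of the exchanged moves
    reinforcement -- assumed 0..1 strength of AI reinforcement per exchange
    base          -- assumed baseline probability before any exchange
    """
    # Per-exchange chance of tipping into escalation, clipped to [0, 1].
    step = min(1.0, max(0.0, intensity * reinforcement))
    return 1.0 - (1.0 - base) * (1.0 - step) ** n_exchanges


if __name__ == "__main__":
    for n in (0, 1, 5, 20):
        print(n, round(escalation_probability(n, intensity=0.5, reinforcement=0.2), 3))
```

Under these assumptions the probability grows with the number of exchanges and grows faster when the exchanges are more intense, which is the qualitative pattern the model predicts.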

Security Dilemma Without Ill Intent

At this stage, the conflict begins to resemble the classical security dilemma:

- each side acts defensively,

- each perceives the other as increasingly aggressive,

- neither consciously seeks escalation,

- yet escalation occurs.

AI acts here as an accelerator of interpretive certainty, reducing ambiguity and reinforcing narrative coherence on both sides.

Theoretical Context and Novelty of the Model

This model develops earlier research on human–AI cognitive loops (e.g., M. Glickman, T. Sharot, B. Wang), which focused mainly on a single user.

In this approach, the key element is the collision of two mutually reinforcing interpretive loops in interaction.

This mechanism is potentially even more unstable in triadic and multi‑party systems, where the escalation threshold is lower and narrative synchronization is more difficult.

9. Possibilities for Falsifying the Model

Any theory aspiring to the status of a scientific framework must be falsifiable. The model of coupled interpretive loops (CCB) meets this requirement because it generates specific, testable predictions that can be empirically confirmed or refuted.

9.1. AI’s Influence on Strengthening the Fundamental Attribution Error

The model would be falsified if:

- AI consultations did not increase the tendency to attribute negative intentions,

- AI weakened, rather than strengthened, FBA,

- AI users did not show lower empathy and lower tolerance for ambiguity than the control group.

9.2. AI’s Influence on Escalatory Behavior

The model would be refuted if:

- AI‑consulting users did not show greater propensity for escalation,

- AI consultations did not influence the choice of moves A₂/B₂,

- escalation levels in the AI group and the control group were identical.

9.3. Ping‑Pong Logic

The model would be falsified if:

- the number of ping‑pong exchanges did not correlate with escalation,

- ping‑pong under AI conditions led to greater de‑escalation than in the control group,

- AI consultations did not influence the interpretation of subsequent moves.

9.4. Epistemic Asymmetry

The model would be refuted if:

- AI users and human‑advisor users showed identical escalation patterns,

- epistemic asymmetry had no effect on conflict dynamics.
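As one possible way to check predictions of this kind, for example whether escalation levels in the AI group and the control group differ (9.2), a simple permutation test could be used. This is a sketch under stated assumptions: the choice of test, the function name `permutation_p_value`, and the idea of scoring each participant with a single numeric escalation level are mine and are not prescribed by the model. No real data is included; the inputs would have to come from an actual study.

```python
import random
from statistics import mean
from typing import Sequence


def permutation_p_value(
    ai_group: Sequence[float],
    control_group: Sequence[float],
    n_permutations: int = 2000,
    seed: int = 0,
) -> float:
    """Two-sided permutation test on the difference in mean escalation level."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    observed = abs(mean(ai_group) - mean(control_group))
    pooled = list(ai_group) + list(control_group)
    n_ai = len(ai_group)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # reassign group labels at random
        diff = abs(mean(pooled[:n_ai]) - mean(pooled[n_ai:]))
        if diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_permutations + 1)
```

A large p-value would mean that the observed difference between the groups is compatible with chance, which counts against the model; a small one would be consistent with it, although it would not by itself show that CCB is the cause.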

10. Author’s Statement

This text was prepared with the assistance of language models. Their help was not generative in nature, but testing, editorial, and supplementary.

Conclusions

- The fundamental attribution error operates at every stage of conflict.

- AI reinforces FBA through CCB, giving interpretations a veneer of objectivity.

- AI‑consulted conflicts have a multi‑branch, not linear, structure.

- Pause, lateral response, and ping‑pong are critical decision states.

- Ping‑pong under AI may reverse the classical logic of de‑escalation.

- Escalation may be a function of the number and intensity of exchanges, not merely ill intent.

- The model is falsifiable — and this makes it a theory, not a dogma.

If you are interested in my hypothesis, you can read this article: https://pmc.ncbi.nlm.nih.gov/articles/PMC11860214/? and this one: https://dl.acm.org/doi/10.1145/3664190.3672520

Here is my main text about CCB:

You can also read this one:

COUPLED CONFIRMATION BIAS – WHAT DOES IT LOOK LIKE?

In this article, I want to explain in a shorter and more accessible way how Coupled Confirmation Bias works. I also want to show what a feedback loop in AI‑consulted conflicts actually looks like. Imagine two people in a dispute. Both are using AI to interpret the other’s behaviour. The conflict begins at what I call Level 1 (L1), the baseline interpretation of the other side’s actions. Importantly, L1, L2 and L3 are not merely different interpretations of the same actions; each level involves a new or intensified set of behaviors driven by AI-reinforced interpretation.

What is Coupled Confirmation Bias (CCB)?

The Coupled Confirmation Bias (CCB) is a conflict escalation mechanism in which two or more parties to a dispute, relying on external interpretive systems perceived as epistemically privileged, mutually legitimize their own narratives. This mechanism is recursive. Actions taken on the basis of such legitimization subsequently become input data for further analysis on the opposing side. This leads to a coupled interpretive spiral and a gradual narrowing of the negotiation space. By using the term recursive, I refer to a spiral in which each human–AI interaction amplifies the previous state.

How does Coupled Confirmation Bias (CCB) form?

Here is how Coupled Confirmation Bias forms:

1. A makes move A1.

2. B consults AI about A1.

AI does not invent new meanings — it tends to reinforce the user’s initial intuitions. But what are the initial intuitions created by? Humans start from a predictable place: the fundamental attribution error. We naturally explain others’ behaviour through negative traits rather than external circumstances. It’s fast, cognitively cheap, and emotionally self‑protective.

AI strengthens this starting point.

The result is tunnelled interpretation: B becomes more certain that A acted with negative intent.

3. B responds with move B1.

From B’s perspective, this is a defensive reaction to a perceived threat. But A interprets B1 through the same mechanism — again reinforced by AI.

Now both sides interpret these moves as increasingly defensive or aggressive. A consults AI about B1 and interprets it as an aggressive escalation that requires a response.

And here is the crucial part:

👉 A’s response – A2 – is performed at a higher level of perceived escalation.

👉 Interpretation changes behavior. Behaviour changes the conflict.

This is the shift from L1 to L2:

not just a cognitive loop, but a real behavioural escalation.

4. The loop accelerates.

Each side consults AI.

Each side receives confirmation of its fears.

Each side responds defensively from its point of view.

Each defensive move is read by the other as escalation.

At this point, a classic security dilemma emerges:

each party, trying only to protect itself, unintentionally signals aggression to the other.

Consequences

AI amplifies this dynamic by reinforcing each side’s subjective narrative.

This is the core of what I call Coupled Confirmation Bias (CCB) — a recursive interpretive loop between two humans and two AI systems, producing real‑world escalation without bad intentions, without malice, and often without awareness.

My hypothesis builds on pioneering research on human-AI cognitive loops, especially by M. Glickman, T. Sharot, B. Wang, Yuxin Liu, and Lenart Celar. While they focused on individual bias, I observe how these loops collide in two-party disputes.

I assume that similar phenomena also occur in multilateral disputes, especially in tripartite arrangements, which are inherently very unstable.

You can also read the main text:

COUPLED CONFIRMATION BIAS – MY CONCEPT

In this article, I present my original proposal of the Coupled Confirmation Bias (CCB). It is a conceptual framework designed to analyze the escalation of conflicts in situations where both parties to a dispute independently rely on AI systems to interpret the conflict and to justify their own positions. Unlike classical confirmation bias, which operates at the level of the individual, CCB describes a systemic mechanism. In this mechanism, mutually reinforcing narratives lead to a progressive narrowing of the negotiation space and an increased likelihood of escalation. The article identifies the conditions under which this mechanism is activated, its typical consequences, and the limits of its applicability. The purpose of this text is not to present a fully validated theory. Its purpose is to formulate a hypothesis regarding the existence of a repeatable mechanism observed in contemporary conflicts. What these conflicts share is that AI models are used as analytical tools supporting decision-making.

1. Introduction: When Rational Tools Amplify Irrational Outcomes

Where does the Coupled Confirmation Bias come from? People increasingly rely on AI. They use it to assess existing relationships both before a conflict emerges or becomes consciously recognized, and during the conflict itself. AI is used to assess risk, develop strategies, and justify proposed actions.

AI models are also increasingly used to analyze statements and non-verbal actions of the opposing party. AI systems are commonly perceived as authoritative, and their recommendations as neutral, objective, and free from emotional involvement. Paradoxically, however, as I have observed, the use of language models often correlates with faster conflict escalation, rigidification of positions, and premature breakdown of negotiations. This does not appear to be a coincidence.

In this article, I argue that these phenomena cannot be sufficiently explained solely by individual cognitive biases. They cannot be explained either by simple confirmation bias or by the fundamental attribution error.

Instead, I assume the existence of a systemic mechanism: the Coupled Confirmation Bias (CCB). It emerges in situations where parties to a conflict independently use AI tools as a source of authoritative interpretation of the dispute.

This interpretation may concern external factors, factual actions, and declarations of the opposing party. It may also concern conjectures about the opponent’s true intentions, acceptable risk, costs they are willing to bear, and their actual so-called “red lines”, meaning critical points they will not allow to be crossed under any circumstances.